The Child Mind Institute built Mirror, a digital journaling app, with a specific ethical goal in mind. Rather than creating another mental health app, the team prioritized responsible AI design that treats vulnerable disclosures as a pathway to human connection, not a replacement for it.

Mirror asks a fundamental question that guides its development: when young people share their most private thoughts with an algorithm, how can the technology actually help them? This framing moves beyond collecting data or providing automated responses. It recognizes that algorithmic analysis of sensitive content requires guardrails.

The Child Mind Institute's approach reflects growing awareness among child mental health experts that AI tools for teens need intentional safeguards. Young people already use digital journaling and mood-tracking apps at high rates. Research from the American Psychological Association shows adolescents value privacy and authenticity when sharing about mental health. They're also skeptical of surveillance.

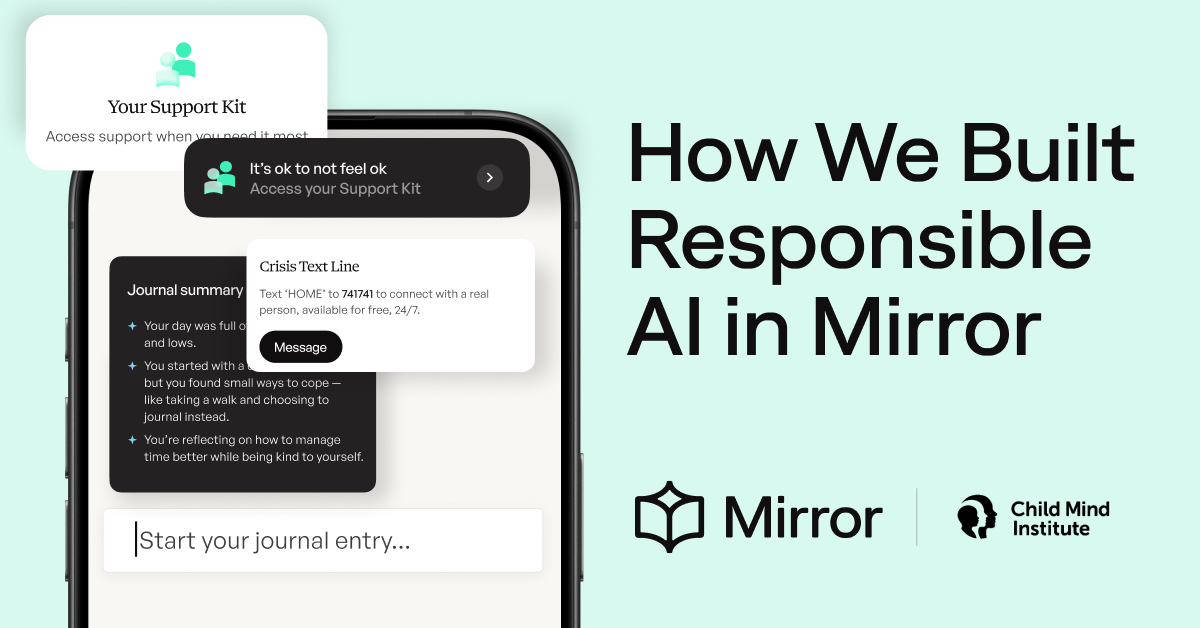

Mirror's design philosophy treats the algorithm as a support tool that encourages connection with trusted adults, therapists, or counselors. Rather than positioning AI as the solution, the app uses technology to help young people articulate their thoughts clearly. That clarity, in turn, supports better conversations with real humans who can provide clinical judgment and relational support.

This matters because teens struggling with anxiety, depression, or other challenges often tell digital tools things they won't say aloud. An irresponsible app might use that data for targeted ads, sell insights to third parties, or deliver generic responses that feel hollow. Mirror's framework asks developers to see vulnerable disclosures as sacred ground requiring extra thoughtfulness.

Parents considering mental health apps for their teens should ask similar questions. Does the app position itself as replacing professional help or supporting it? What happens to entries your teen writes? Does the company sell data? Can you access what your teen is sharing if you're concerned?

The Child Mind Institute's approach suggests responsible AI in mental health apps combines technical capability with